A necessary disclosure: some of the links in this article contain our affiliate information. This means that if you follow such a link and order a service from the company, we may receive a small commission for sending them a customer (you). Keep in mind, however, that we don’t make recommendations just for the commission: we recommend our partners only because they are extremely good at what they do, and we use their services ourselves!

If you have a WordPress-powered web site and you want to finally jump on that CDN bandwagon everyone is talking about, it’s very easy to set up.

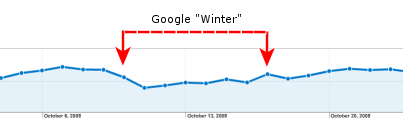

If you have been living under a rock for the last few years and don’t know why you would need a CDN (Content Delivery Network), here is the elevator pitch: a CDN speeds up the delivery of static files (such as images, CSS, and JavaScript) and makes your web site respond faster. And a faster web site is good not only for your human visitors but also for SEO: a faster web site tends to rank better in the Google search results.

One of the more popular CDN providers is MaxCDN, which we’ve been using for our primary web site WinAbility.com for about a year now, and we are very happy with it. It offers a control panel that you can use to set everything up manually, but if your web site is running on WordPress, there is a WordPress plugin that does all the necessary configuration for you. Here is what to do, step by step:

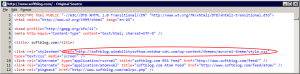

0. (Optional) If you are familiar with HTML and want to see exactly what the CDN does to the HTML code of your web pages, you may want to save the page source of your main page so that you can compare it with the new code after enabling the CDN. Here is what the HTML source of this web site (softblog.com) looked like before we enabled the CDN for it:

1. Open an account with MaxCDN. It’s easy, and they offer a free trial. There is also a 25% off offer for new customers, if you want to take advantage of that.

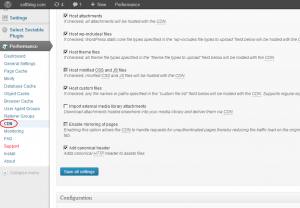

2. Install the W3 Total Cache plugin into your WordPress site. After you activate it, it will add some speed to your site out of the box, and one of the options it offers on its menu is the CDN integration:

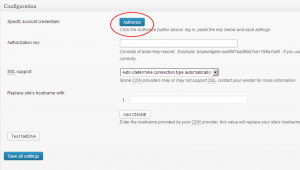

3. Select CDN from the menu on the left, and Total Cache should ask you to authorize access to your MaxCDN account:

Press the Authorize button, and you will be taken directly to a special web page at MaxCDN with your authorization key pre-formatted. Copy it, go back to your WordPress admin panel, and paste it there. Press the Save all settings button, and on to the final step:

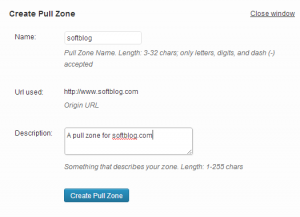

4. Create a pull zone (by pressing the Create pull zone button, of course):

What is a pull zone, you might ask? Think of it as a special CDN service that pulls files from your main server and delivers them to the end user when the user opens your web page. MaxCDN also supports push zones, which work in much the same way as FTP servers, but we won’t use them here for now.

Anyway, for this web site (softblog.com) I entered softblog as the name for the pull zone, to distinguish it from the pull zones I had previously created for other web sites. After the zone is created, make sure it is selected on the CDN page of the Total Cache plugin, as shown above. Press the Save all settings button once again, and your CDN is now set up!

If something is wrong, look at the top of your WordPress admin page: Total Cache is good about detecting problems and telling you exactly what to do to fix them. If no problems are reported, congratulations, all done, your WordPress site now uses MaxCDN!
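To make the pull zone idea a bit more concrete, here is a minimal Python sketch of a pull-through cache. It is only a toy model, not how MaxCDN is actually implemented: the `PullZone` class, the `fake_origin` function, and the file path are all made up for illustration, and real CDNs also deal with cache expiration, HTTP headers, and many edge locations.

```python
from typing import Callable, Dict


class PullZone:
    """Toy model of a pull zone: pull a file from the origin on the
    first request, then serve it from the local cache afterwards."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes]) -> None:
        self._fetch_from_origin = fetch_from_origin
        self._cache: Dict[str, bytes] = {}
        self.origin_hits = 0  # how many times we had to go to the origin

    def get(self, path: str) -> bytes:
        if path not in self._cache:
            # Cache miss: pull the file from the main server once.
            self._cache[path] = self._fetch_from_origin(path)
            self.origin_hits += 1
        # Cache hit (or freshly filled cache): serve the stored copy.
        return self._cache[path]


def fake_origin(path: str) -> bytes:
    # Hypothetical stand-in for an HTTP request to the main web server.
    return f"contents of {path}".encode()


zone = PullZone(fake_origin)
first = zone.get("/wp-content/themes/example/style.css")
second = zone.get("/wp-content/themes/example/style.css")
print(zone.origin_hits)
```

The second request for the same file never reaches the origin, which is the whole point: your main server is asked for each static file only occasionally, and the CDN handles the rest.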

5. (Optional) Look at the page source of your web site again; ours has the following:

Compare it with the page source you obtained before enabling MaxCDN. See the difference? The CSS file is now served from the CDN pull zone. The same should be true for other static files and images. From now on, if someone on the other side of the globe opens your web page, they don’t need to wait for the static files to travel all the way around the world to reach them: MaxCDN will deliver the files to the user’s browser from the closest location.
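If you are curious what that change amounts to, the following Python sketch mimics it: it rewrites links to static files so that they point to a CDN host, and leaves ordinary page links alone. This is an illustration only, not the actual code of the Total Cache plugin; the CDN host name `softblog.example-cdn.net`, the theme path, and the list of file extensions are assumptions made up for the example.

```python
import re

# Assumed list of static file types; a real setup may cover more.
STATIC_EXTENSIONS = ("css", "js", "png", "jpg", "gif")


def rewrite_static_urls(html: str, origin_host: str, cdn_host: str) -> str:
    """Point URLs of static files at the CDN host, leave other URLs alone."""
    pattern = re.compile(
        r"(https?://)" + re.escape(origin_host)
        + r"(/[^\"'\s>]+\.(?:" + "|".join(STATIC_EXTENSIONS) + r"))"
        + r"(?=[\"'?\s>])"  # the extension must end the path
    )
    return pattern.sub(lambda m: m.group(1) + cdn_host + m.group(2), html)


html_before = (
    '<link rel="stylesheet" '
    'href="http://softblog.com/wp-content/themes/example/style.css" />\n'
    '<a href="http://softblog.com/about/">About</a>'
)

# "softblog.example-cdn.net" is a hypothetical pull zone host name.
html_after = rewrite_static_urls(
    html_before, "softblog.com", "softblog.example-cdn.net"
)
print(html_after)
```

In the output, the stylesheet link points to the CDN host, while the link to the About page still points to the main server, because only static files are worth serving from the CDN.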

Happy word pressing!

February 17th, 2007 at 10:10 pm

I believe that this is because there is an NTFS fork on the directory that says that anything in that directory shouldn’t be trusted. This is similar to XP in how it knows that a file was downloaded recently by IE.

February 19th, 2007 at 6:06 pm

Hi Andrei,

Have a quick question about TweakUAC. Can I suppress UAC messages only for a single application using TWeak? Or does it suppress all UAC messages, system wide?

Thanks.

Regards,

Soumitra

Hi Soumitra,

> Can I suppress UAC messages only for a single application using TWeak?

No, it’s impossible.

> Or does it suppress all UAC messages, system wide?

Yes, that’s how it works.

Andrei.

February 20th, 2007 at 11:22 am

Hi Myria,

> I believe that this is because there is an NTFS fork on the directory that says that anything in that directory shouldn’t be trusted. This is similar to XP in how it knows that a file was downloaded recently by IE.

It may very well be so, but it does not make it any less of a bug. If a file contains a valid digital signature, Windows should not misrepresent it as coming from an unidentified publisher.

Andrei.

February 26th, 2007 at 1:09 pm

How did you take a screenshot of the UAC? I can’t get Print Screen to copy it to the clipboard, and the snipping tool isn’t working either.

February 26th, 2007 at 9:55 pm

Hi Chris,

> How did you take a screenshot of the UAC? I can’t get Print Screen to copy it to the clipboard, and the snipping tool isn’t working either.

Those tools don’t work because UAC displays its messages on the secure desktop, to which the “normal” user tools have no access. To solve this problem, I changed the local security policy to make the UAC prompts appear on the user’s desktop. After that, I used the regular Print Screen key to capture the screenshots.

Hope this helps,

Andrei.

March 4th, 2007 at 2:58 am

Hi Andrei,

I sell software to a *very* non-technical customer base. My setup procedure includes installation of an .ocx file into the \windows\system32 folder and registration of it using regsvr32. In order to copy anything into the \windows\system32 folder under Vista I have to turn off UAC. I would like to be able to do this automatically, programmatically, so I don’t have to make my users mess with UAC. I’d like to be able to turn off UAC for a second or two programmatically, then turn it back on. Will your software enable me to do that?

Thanks

March 4th, 2007 at 11:42 am

Hi Matthew,

> I’d like to be able to turn off UAC for a second or two programmatically, then turn it back on.

Unfortunately, it’s impossible: if you enable or disable the UAC, Windows must be restarted before the change takes effect.

To solve your problem:

> In order to copy anything into the \windows\system32 folder under Vista I have to turn off UAC.

It looks like your setup process is executing non-elevated, and that’s why it cannot do that. You may want to try starting it elevated and see whether that solves the problem without turning off the UAC.

HTH

Andrei.

March 25th, 2007 at 10:24 am

don’t use TweakUAC because this program makes your Vista unsafe!

March 29th, 2007 at 10:30 pm

> How are you supposed to make the decision whether to trust a certain program or not if UAC does not provide you with the correct information? (Nevermind, it’s a rhetorical question).

The answer is you are not. A guest should not be allowed to make any decision about installing software. If you log on as a valid user, the prompt works just fine. If you log on as a guest, you shouldn’t be installing software, so any dire warning is fine.

Yes, this might be unintended behavior (or perhaps it is not), but its impact is null.

March 31st, 2007 at 4:47 pm

Hi Herbys, you wrote:

> The answer is you are not. A guest should not be allowed to make any decision about installing software.

Sorry, but you are missing the point: the UAC displays this information for the _administrator_ to use and to make a decision, not for the guest user. The administrator is supposed to review the information and enter his or her password to approve the action. Take a look at the screenshot and see for yourself.

> If you log on as a guest, you shouldn’t be installing software

Why shouldn’t I? What if I want to install a program for use by the guests only? For example, I use only one web browser (IE), but I never know what browser a guest may want to use. So, being a good host I want to install also Firefox and Opera, but I don’t want them to clutter my desktop, etc., I want them to be used by the guests only. To achieve that, I would log in to the guest account and install the additional browsers from there.

> so any dire warning is fine.

Wrong.

> Yes, this might be unintended behavior (or perhaps it is not), but its impact is null.

Maybe, maybe not. In any case, it does not make it any less of a bug!

April 10th, 2007 at 9:28 pm

Is there any plan to adapt your program into a Control Panel Applet? I think that would be very clever.

April 11th, 2007 at 9:07 am

Hi Timothy, you wrote:

> Is there any plan to adapt your program into a Control Panel Applet?

No, we don’t have such plans at this time, sorry.

June 18th, 2007 at 9:19 pm

Anyone know why Vista won’t let me rename any new folder?

The permissions are all checked for me as administrator, still I get an error message, “folder does not exist”. I can put things in the folder and move it, but can’t rename it?

January 29th, 2008 at 9:46 am

To be honest, I have always thought that digitally signing was merely a way of generating more revenue. It doesn’t offer you any more security, and Windows will always moan at you regardless of whether an application has a signature or not.

Even if your application has the “all powerful” and completely unnecessary Windows Logo certification, it still offers nothing to you as a user other than the reassurance that the person/s developing the software has a lot of spare cash.

February 24th, 2008 at 10:34 am

Bob Said:

> To be honest, I have always thought that digitally signing was merely a way of generating more revenue.

I have to agree. I’ve heard the argument of how it’s all designed to protect users from malicious software, and that’s all well and good as far as that goes — but since Vista, and most mobile OSes, don’t offer a way for users to say “okay, I understand the risk, I accept full responsibility, please go ahead and run this unsigned application without restrictions, and never bother me again when I try to run this application”… That makes it pretty clear it’s just a racket initiated by VeriSign and the like, and happily endorsed by Microsoft.

May 17th, 2008 at 9:03 am

This is not a bug.

The first screen shot shows that Windows doesn’t trust the identity contained in the certificate. In other words, “I can read this, but I don’t know if I should trust the person who wrote it.”

The second screen shot just shows that the certificate is well-formed, that Windows can understand the information contained within it. It says nothing about what Windows will do with that information.

Who did you pay to sign the certificate for you? If they’re not someone with a well-established reputation, then I don’t WANT my computer to automatically trust them.

It’s just like how web browsers automatically trust SSL certificates signed by Thawte or Verisign, but will ask you before accepting a certificate from Andy’s Shady Overnight Certificate Company. As always, it’s a balancing act between usability and security.

May 17th, 2008 at 10:04 am

Andrew, you wrote:

> This is not a bug.

OK, there is a fine line between a bug and a feature, so let’s assume for a moment that it’s a feature rather than a bug. If so, what benefit is this feature supposed to provide? As the second screen shows, the file is digitally signed, and Vista can detect that. Yet, it shows the publisher as “unidentified” on the first screen. Note also (as I mentioned in the post) that if you move the file to one of a few specific folders (such as C:/Program files), Vista would magically begin to recognize the publisher. Move the file to some other folder, and it’s unidentified again.

If you can explain why they designed it that way, I would agree with you. Until then, it’s a bug. Guilty until proven innocent!

> Who did you pay to sign the certificate for you?

That particular file was signed with a Verisign certificate, but the same problem occurs with _any_ file, signed with _any_ certificate. Try it yourself and you will see.

June 1st, 2008 at 12:53 pm

I think that it occurs because of IE7 protected mode – see http://victor-youngun.blogspot.com/2008/03/internet-explorer-7-protected-mode-vs.html, it’s a guide to running Firefox in protected mode, and it explains very well how the protected mode works… The prompt appears because the “Download” folder is a protected folder (level “low”), and I think it is only displayed for the guest because Windows forces UAC to display the certificate in normal user mode in “low” level folders, but NOT for the MUCH MORE RESTRICTED “guest” account. This would be my explanation.

It doesn’t mean that I like how Microsoft handles this, but it would explain WHY the warning appears sometimes and sometimes not.

June 1st, 2008 at 12:55 pm

sorry, in my last post there is a comma in the link, the correct link is:

http://victor-youngun.blogspot.com/2008/03/internet-explorer-7-protected-mode-vs.html

June 3rd, 2008 at 7:16 pm

Farthen: thank you for the information and explanation!